València (Spain) 13 MAR 2026 6:00. Levante EMV.

Right now, we are not facing a classic authoritarian regime: we still vote; we have parliaments, courts, fundamental rights, and social peace; yet power is changing hands. The question is, who really governs when algorithms decide what is visible and what is not?

https://www.levante-emv.com/opinion/2026/03/13/nou-absolutisme-no-porta-corona-127867489.html

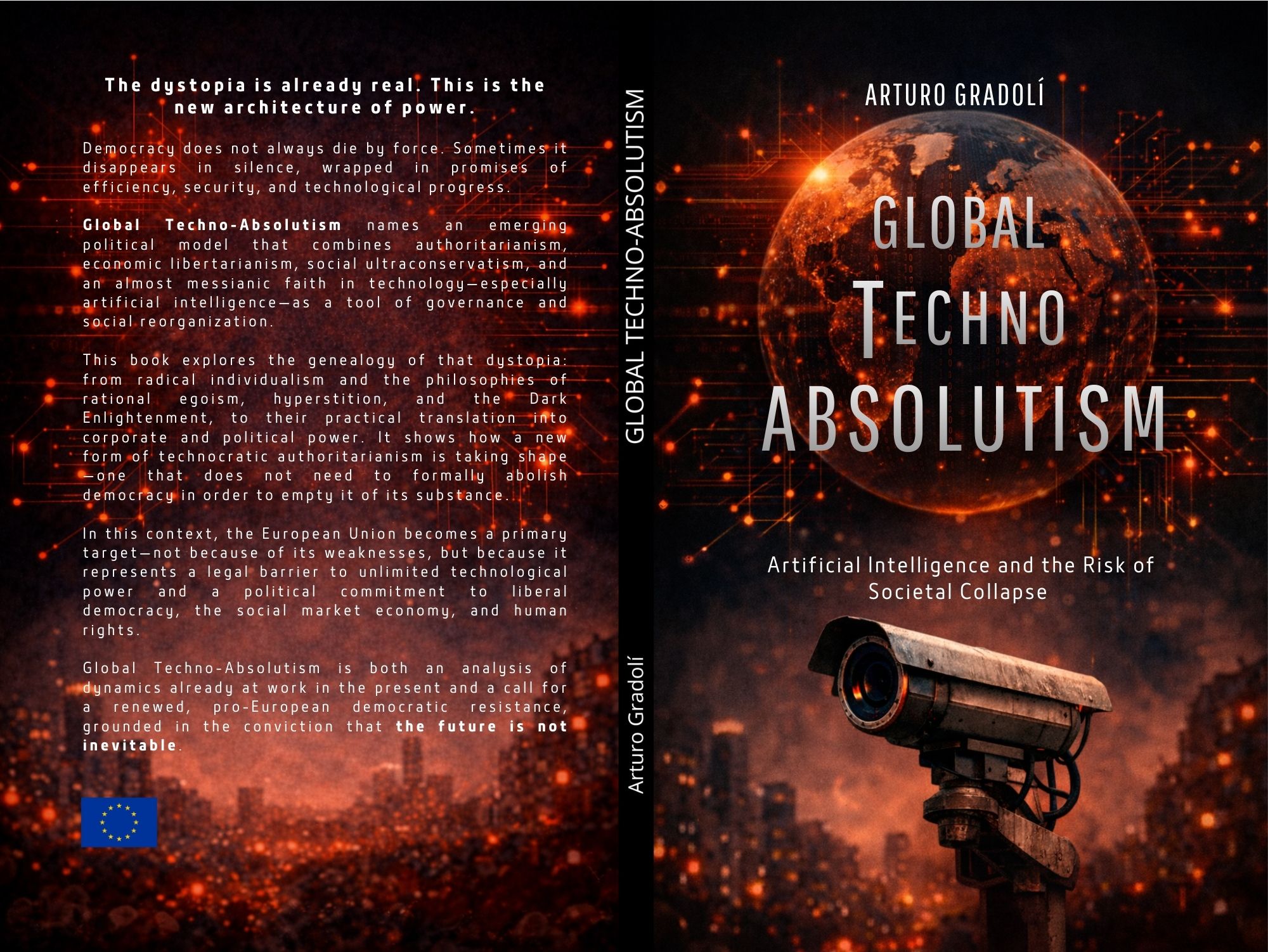

“Democracy does not always die by force; sometimes it disappears in silence.”

This sentence opens the book Global Techno-Absolutism and serves as both a diagnosis and a warning.

For decades, in Europe we have assumed that democracy could only disappear through upheavals such as coups d’état, constitutional suspensions, or military revolts against institutions. But what the book suggests is far more unsettling: what if democracy is not being destroyed, but emptied?

At present, we are not living under a classic authoritarian regime: we still vote; we have parliaments, courts, fundamental rights, and social peace; but power is shifting. Structural decisions increasingly depend on global technological environments that are not subject to democratic control, such as the visibility of information, the hierarchy of content, or digital infrastructures. So, the question arises: who really governs when algorithms decide what is visible and what is not?

And why Techno-Absolutism? When we think of absolutism, we evoke the 17th and 18th centuries and Louis XIV proclaiming, “L’État, c’est moi.” Power concentrated in a single executive will, without effective checks and balances. Today, however, we are beginning to live under a new form of absolutism. We do not see the face of a monarch. It does not need a crown. It does not live in Versailles or anywhere in particular. Instead, power is concentrated in artificial intelligence systems, social networks, and digital infrastructures managed by technocratic elites with extraordinary economic and decision-making capacity. If once the king could do everything, now we might say that algorithms decide for everyone. There is no sovereign issuing decrees, but there is a structural shift of power outside the traditional circuit of institutional representation, as algorithms and AI systems increasingly influence decision-making processes that affect society at large. And that is deeply political.

The comparison with George Orwell’s novel 1984 is inevitable, but in Orwell’s dystopia power is visible, centralized, and repressive. Today, power is diffuse, technological, and seemingly neutral. It does not systematically prohibit. It does not need massive censorship: it guides, filters, and prioritizes.

Algorithms know our preferences, beliefs, age, interests, and even our mood. Based on these factors, they select what we see first, what is diluted, and what goes viral. Whoever controls visibility shapes the public sphere. Such control is not a power that tells us what to think; it is a power that conditions what is available for us to think about. And when someone controls the criteria of prioritization, they are intervening in democracy before democracy can react. Consider, for example, an electoral campaign, a health crisis, or a climate emergency. If information circulates according to opaque commercial criteria, who is really setting the agenda?

We are not, then, dealing with a secret plan coordinated by dark minds, but rather with a historic and momentous convergence of power.

During the 20th century, ideas such as radical individualism, distrust of liberal democracy, economic ultraliberalism, and absolute faith in technology were scattered. The influence of philosopher Ayn Rand is crucial here, as she established the idea that the exceptional individual stands above society, the state is an obstacle, and equality is a burden. Rand defended an extremely radical view: the individual is the only fully legitimate moral subject, so rational selfishness and extreme inequality are not defects but virtues.

Today’s technological elites have grown intellectually within this ideological framework. This perspective helps explain figures such as Curtis Yarvin and Nick Land, associated with currents like post-democracy, accelerationism, or the so-called Dark Enlightenment.

We are facing a situation with a clear intentional dimension, expressed in the spread of a markedly authoritarian, even anarcho-capitalist ideology, combining economic ultraliberalism, moral conservatism, and an almost messianic faith in technology—especially artificial intelligence—as a central instrument of governance, control, and social reorganization.

Actors who control global digital infrastructures put these ideas into practice, transforming them from marginal theories into governance.

“Europe is the last regulatory wall and plays a central role in opposing techno-absolutism.”

In the current scenario, Europe serves as the final regulatory barrier, playing a pivotal role in resisting techno-absolutism by upholding the rule of law, maintaining a separation of powers, safeguarding fundamental rights, and regulating the market. From the General Data Protection Regulation to legislation on digital markets and artificial intelligence, Europe seeks to establish a fundamental idea: technology is not above the law. However, from the techno-absolutist perspective, these limits are considered obstacles, since politics should kneel and decisions should be left in the hands of experts or technological corporations, both private and public. In this context, if Europe gives up regulating, it will lose not only economic competitiveness but also democratic sovereignty.

All this may seem like a distant, theoretical debate, but it is not: it affects our daily lives, every day. For instance, a local business may depend on an algorithm for its digital presence, a media outlet might rely on the structure of a global platform to reach readers, and political information can circulate based on unclear ideological criteria. And this is politics, because the issue is not whether we use more or less technology; the issue is who defines the rules and who benefits from them.

The future is not written. But it forces us to ask an essential question: do we want to be citizens or users?

Citizens participate, deliberate, and demand accountability. Users, by contrast, accept terms and conditions. Democracy can adapt and be strengthened by technology, or it can gradually give up space without realizing it, leading to a potential erosion of civic engagement and accountability in governance. Let us repeat: the future is not written by algorithms; it depends on the political decisions we make as a liberal democratic society. Because democracy does not always die by force; sometimes, it simply disappears in silence.

Arturo Gradolí holds a PhD in Historical and Social Studies of Science and is the author of Global Techno-Absolutism (Artificial Intelligence and the Risk of Social Collapse).